One of the things that always intrigues me is to look back at how a technology has evolved. Building programs to communicate with computers is something that has a particularly interesting history. Ada Lovelace is recognized as having written the first program to calculate Bernoulli numbers using Charles Babbage’s Analytical Engine back in the mid-nineteenth century. Later in the following century came coding languages for lambda calculus and Turing machines making tape-based computations. Then assembly language, FORTRAN, LISP, COBOL, BASIC, C, and SQL came along with more general purpose and specialized programming languages leading up to the twenty-first century. Fast forward to today, and we have Python, Java, Rust, Julia, Swift, and a whole host of programming languages designed for humans to communicate with computers to achieve a specific outcome.

While programming languages have evolved tremendously, at their core they all still have one major thing in common: having a computer accomplish a goal in the most efficient and error-free way possible. Modern languages have made development easier in many ways, but not a lot has changed in how we actually inspect the individual lines of code to make them error free. And even less has been done when it comes to improving code quality in ways that improve performance and reduce operational cost.

Where build and release schedules once slowed down the time it took developers to ship new features, the cloud has turbocharged this process by providing a step-function increase in the speed at which code is built, tested, and deployed. New features are now delivered in hours (instead of months or years) and are in the hands of end users as soon as they are ready. Much of this is made possible through a new paradigm in how IT and software development teams collaborate and build best practices: DevOps.

Although DevOps technology has evolved dramatically over the last 5 years, it is still challenging. Issues related to concurrency, security, or the handling of sensitive information require expert evaluation and often slip through existing mechanisms like peer code reviews and unit testing. Even for organizations that can invest in developers who are expert code reviewers, the pace at which software is developed creates large volumes of complex code that are difficult to review manually. The sampling is always random, and there is no assurance that this manual process picks up all, or even most, of the relevant bugs or performance issues. This ultimately results in an increase in technical debt and bad customer experiences.

Build and operate with a machine learning powered guru

Recognizing these challenges, AWS is on a journey to revolutionize DevOps using the latest technologies. We are starting to treat DevOps, and the toolchains around it, as a data science problem. When we think of it this way, code, logs, and application metrics are all data that we can optimize with machine learning (ML).

Code reviews are one example. They are important for improving software quality and security, and for increasing knowledge transfer in the teams working with critical code bases. Amazon CodeGuru Reviewer, launched in general availability in June 2020, uses ML and automated reasoning to automatically identify critical issues, security vulnerabilities, and hard-to-find bugs during application development.

CodeGuru Reviewer also provides recommendations to developers on how to fix issues to improve code quality and dramatically reduce the time it takes to fix bugs before they reach customer-facing applications and result in a bad experience. To make using it easy, there is no change to a developer’s normal code review process—teams can simply add CodeGuru to their existing development pipeline to save time and reduce the cost and burden of having bad code. These tools are enabling customers to be more agile, innovate faster, and deliver the best possible experiences for their customers. Developers can actually focus on what they love doing—inventing and building. Since its launch, Reviewer has scanned over 200M lines of code and produced 165,000 recommendations on fixes for developers. In fact, over 25,000 Amazon developers use this daily to support their code review processes.

The science at work, providing valuable insights for your code

So what’s really happening under the hood? At a high level, CodeGuru Reviewer is trained on decades of best practices and experience from AWS and Amazon. We use ML and automated reasoning because they allow customers to automate the lessons of millions of code reviews we have done ourselves across thousands of open-source and Amazon repositories. CodeGuru Reviewer leverages ML for multiple purposes, including modeling best practices by analyzing changes to existing code and inferring incomplete information to detect resource leaks, to name a few. As a service, Reviewer is faster than any manual code review process, and can scan 1M lines of code in 30 minutes, saving significant time in your code development processes. Best of all, it provides recommendations on how to fix the issues it finds.

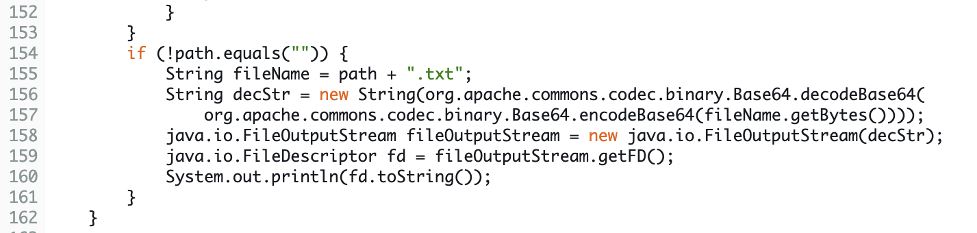

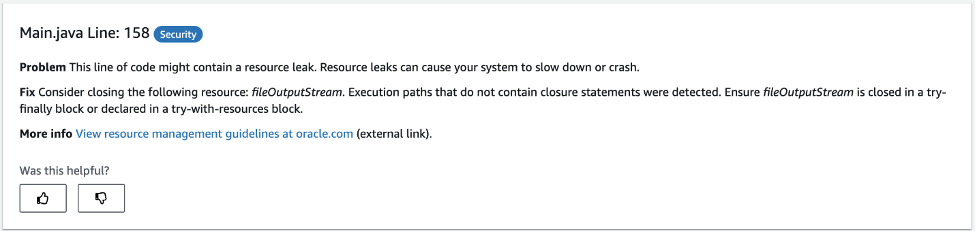

In the example below, the code analyzed by Reviewer does not need to contain all of its dependencies for Reviewer to identify the missing code; it uses ML to make appropriate decisions on where a resource leak might occur. CodeGuru combines static analysis and ML to accurately alert the developer to a bug and to reduce false positives. False positives occur frequently when using static code analysis alone, as the analysis is often built on incomplete information (only the source files and not the external dependencies in the code base). The resource leak detector in CodeGuru Reviewer combines static code analysis with an ML model, which is used to reason about external dependencies and provide more accurate recommendations.

This is one of the ways CodeGuru Reviewer works to help developers automate the review process. It can also detect security vulnerabilities, syntax errors, and other costly bugs before you deploy your code to production.

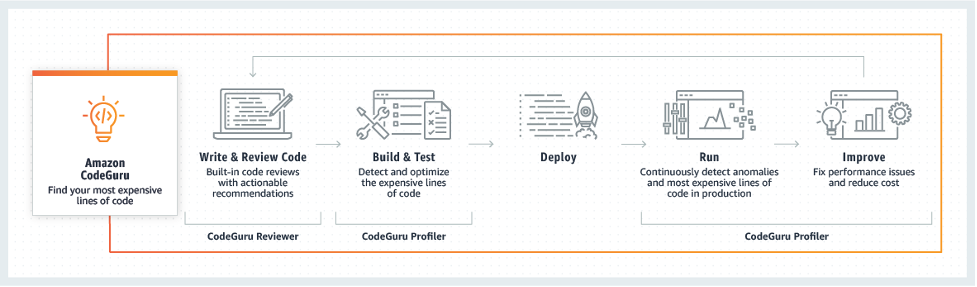

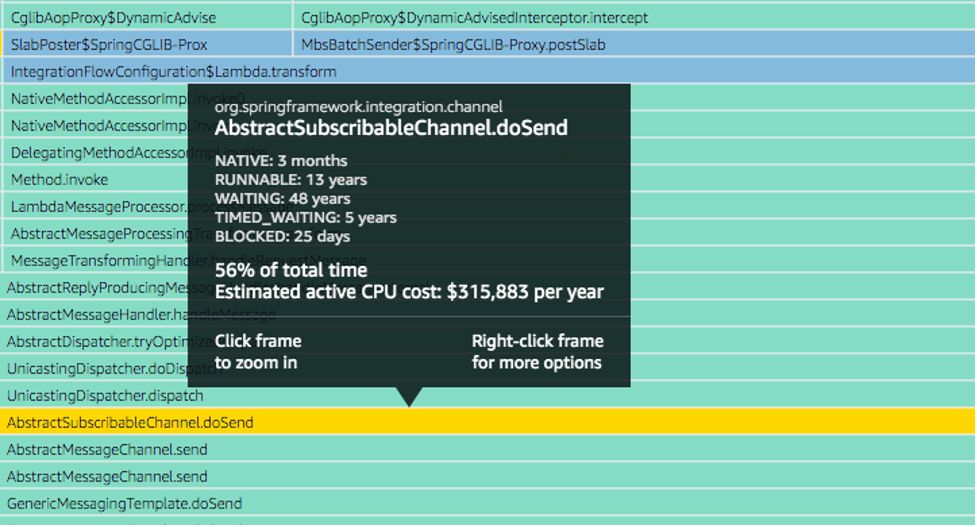
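To make the idea of a resource leak concrete, here is a minimal Python sketch of the general pattern such a detector looks for. It is an illustration only, not output from CodeGuru Reviewer or code from its detector; `TrackedResource`, `process`, `leaky`, and `safe` are hypothetical names standing in for any file, socket, or database connection and the code that uses it.

```python
class TrackedResource:
    """Hypothetical stand-in for a file, socket, or database connection."""

    open_count = 0  # number of resources currently open

    def __init__(self):
        TrackedResource.open_count += 1

    def close(self):
        TrackedResource.open_count -= 1

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc, tb):
        self.close()
        return False  # do not swallow exceptions


def process(resource, fail):
    """Pretend to use the resource; optionally fail part-way through."""
    if fail:
        raise ValueError("simulated failure while using the resource")
    return "ok"


def leaky(fail):
    resource = TrackedResource()
    result = process(resource, fail)  # if this raises, close() below is skipped
    resource.close()
    return result


def safe(fail):
    # The context manager closes the resource on every path, including errors.
    with TrackedResource() as resource:
        return process(resource, fail)
```

When `process` raises, `leaky` leaves the resource open while `safe` still closes it. The exception path is easy to miss in a manual review because the happy path looks correct.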

Find your application’s most expensive lines of code

Code reviews are only one part of the DevOps story. Another aspect of best practices is application profiling in test and production. Troubleshooting performance issues, discovering and pinpointing anomalies, and catching lines of code that are unnecessarily burning compute resources can be a highly manual and time-consuming process. Traditional application profiling also misses hard-to-find chunks of code that are causing issues yet to be discovered. CodeGuru Profiler complements automated code reviews with CodeGuru Reviewer by helping developers understand the runtime behavior of their applications, identify and remove code inefficiencies, improve performance, and decrease compute costs. In short, CodeGuru Profiler helps you identify your most expensive lines of code. Amazon’s internal teams have used Amazon CodeGuru Profiler on more than 30,000 production applications, resulting in tens of millions of dollars in savings on compute and infrastructure costs.
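For readers who have not used a profiler, the following sketch shows the basic idea of locating the most expensive code path. It uses Python's standard-library `cProfile` and `pstats` modules, not CodeGuru Profiler, and the functions `slow_total`, `fast_total`, `workload`, and `most_expensive_function` are hypothetical examples invented for this illustration.

```python
import cProfile
import pstats


def slow_total(n):
    """Deliberately wasteful: recomputes the same inner sum n times."""
    total = 0
    for _ in range(n):
        for j in range(200):
            total += j
    return total


def fast_total(n):
    """Same result using the closed form for 0 + 1 + ... + 199."""
    return n * (199 * 200 // 2)


def workload():
    return slow_total(500), fast_total(500)


def most_expensive_function(func):
    """Profile func() and return the name of the function with the most own time."""
    profiler = cProfile.Profile()
    profiler.runcall(func)
    stats = pstats.Stats(profiler)
    # stats.stats maps (filename, line, name) to
    # (primitive calls, total calls, own time, cumulative time, callers).
    (_, _, name), _ = max(stats.stats.items(), key=lambda item: item[1][2])
    return name
```

Calling `most_expensive_function(workload)` points at `slow_total`, even though both functions return the same value. A production profiler does the same kind of attribution continuously and with low overhead, which a sketch like this does not attempt.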

Our goal with CodeGuru was to move beyond the traditional approach of manual code reviews and application profiling to usher in a new way of thinking about DevOps by taking advantage of ML. CodeGuru helps developers be proactive, not reactive, learn from a continuous feedback loop of ML-powered insights, and ultimately, be better and more efficient coders. This enables developers to better maintain high-quality customer experiences by reducing deployment risks and facilitating faster delivery of new features.

As with all AWS services, we are continuously improving CodeGuru and we have been working hard at broadening the language support and deepening the recommendations provided to developers. We also recently introduced an entirely new pricing structure to help customers budget for and scale code reviews based on project size and deployment schedules. And there are many more exciting updates to come in the near future. I encourage you to check out the latest at aws.amazon.com/codeguru.

Extending the capabilities for a new era of DevOps

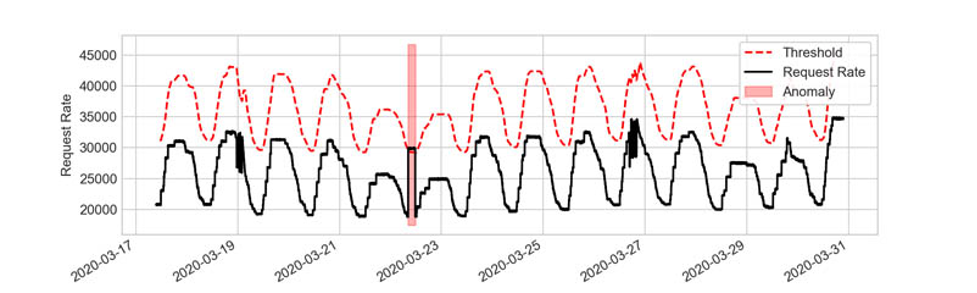

With CodeGuru, we have gone deep into the automation of code reviews and application profiling, but there’s more we’re doing to transform DevOps. Another service we’ve recently launched is Amazon DevOps Guru. DevOps Guru operates at the infrastructure level to further enhance and automate DevOps. The service analyzes data like application metrics, logs, events, and traces to establish baseline operational behavior and then uses ML to detect anomalies. The great thing about DevOps Guru is that the baseline for established behavior is not static. It can also identify failures across metrics that are substantially different from each other but that, combined, end up impacting the availability and performance of an application (for example, latency and incoming requests). The service uses pre-trained ML models that are able to identify spikes in application requests, something you might expect because of an event like Amazon Prime Day. It does this by correlating and grouping metrics together to understand the relationships between them, so it knows when to alert and when not to.
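As a rough illustration of a non-static baseline and of grouping related metrics, here is a small Python sketch. It is a deliberately simplified stand-in built on rolling z-scores, not the pre-trained models DevOps Guru uses. The metric names, the sample data, the window size, the threshold, and the rule "alert only when latency deviates" are all assumptions made for this example.

```python
from statistics import mean, stdev


def rolling_zscores(values, window):
    """Score each point against a moving baseline of the previous `window` points."""
    scores = []
    for i, value in enumerate(values):
        if i < window:
            scores.append(0.0)  # not enough history to form a baseline yet
            continue
        history = values[i - window:i]
        mu = mean(history)
        sigma = max(stdev(history), 1e-9)  # floor avoids division by zero
        scores.append((value - mu) / sigma)
    return scores


def grouped_alerts(metrics, primary="latency_ms", window=5, threshold=3.0):
    """Return {time index: [deviating metric names]} for steps where `primary` deviates.

    Assumed rule for this sketch: a deviation in another metric alone (for
    example an expected traffic spike) does not raise an alert.
    """
    scores = {name: rolling_zscores(vals, window) for name, vals in metrics.items()}
    alerts = {}
    for i in range(len(metrics[primary])):
        deviating = [name for name in metrics if abs(scores[name][i]) > threshold]
        if primary in deviating:
            alerts[i] = deviating  # one alert that groups the related metrics
    return alerts


# Hypothetical sample data: a request spike at index 5 with normal latency,
# then a latency jump at index 11 that coincides with elevated requests.
metrics = {
    "latency_ms": [100, 102, 98, 101, 99, 100, 101, 99, 100, 101, 99, 180],
    "requests": [50, 52, 49, 51, 50, 300, 51, 50, 49, 52, 50, 120],
}
```

With this data, `grouped_alerts(metrics)` returns `{11: ['latency_ms', 'requests']}`: the request spike at index 5 does not page anyone on its own, while the latency jump at index 11 produces a single alert that names both metrics.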

I know from experience the frustration of being paged at 2 AM for a potential application issue, only to find out it’s a false alarm. It doesn’t have to be this way, and DevOps Guru is one way we’re helping solve for that: reducing false alarms, identifying issues with high-confidence alerts, then providing recommendations on how to fix the issues before they become high-severity outages with costly downtime and bad customer experiences.

One of the great things about DevOps Guru—like CodeGuru—is that it doesn’t require ML expertise. It will provide recommendations, even including links to documentation to fix issues, without the need for deep domain expertise.

Next Generation DevOps

With CodeGuru and DevOps Guru, we are excited about this new era of DevOps and the possibilities that augmenting developers’ expertise with ML capabilities will bring. We’re seeing customers of all types start to make use of these technologies to go and build with more confidence than ever before. Confidence that applications are secure, performant, and error free. With DevOps tools powered by ML, customers will have more time to innovate and deliver great experiences for their customers.

Applying ML to DevOps is going to be a game changer. And we’re just getting started.